Overview

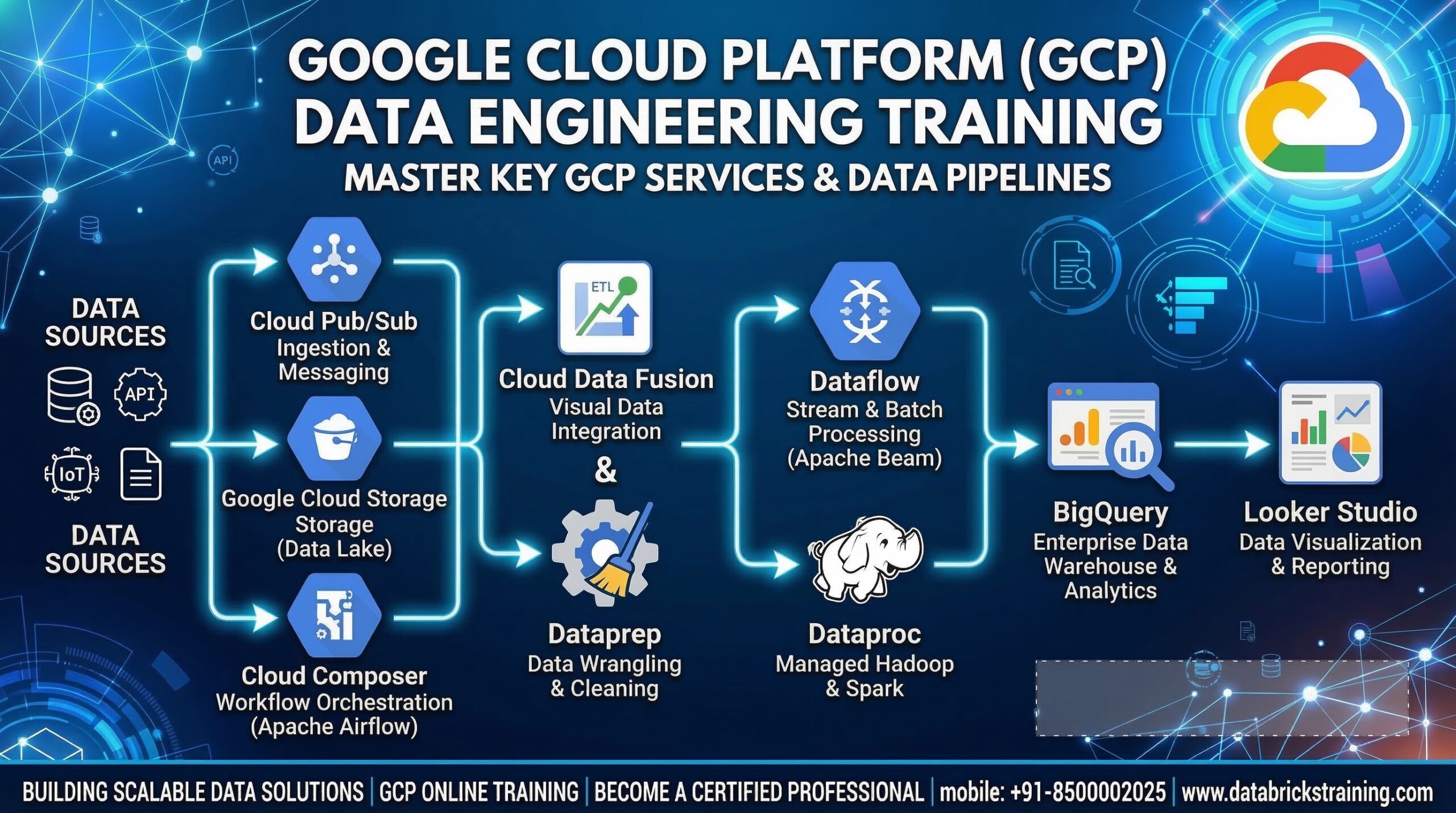

This hands-on GCP Data Engineering training covers all major Google Cloud data services, including BigQuery, Dataflow, Dataproc, Pub/Sub, Cloud Composer, Dataplex, Data Catalog and Datastream. You will build real production pipelines on actual GCP accounts, working through hands-on labs, real datasets and industry patterns used at Google, Deloitte, TCS and Accenture.

What You Will Learn

✅ Build scalable data lakes on Cloud Storage — Bronze, Silver, Gold

✅ Master BigQuery — best practices, optimization, security

✅ Build batch pipelines using Dataproc and PySpark

✅ Build streaming pipelines using Dataflow and Apache Beam

✅ Handle real-time events with Pub/Sub

✅ Implement CDC pipelines using Datastream

✅ Orchestrate pipelines end-to-end with Cloud Composer (Airflow)

✅ Govern data with Dataplex and Data Catalog

✅ Deploy CI/CD using Cloud Build, GitHub Actions and Terraform

✅ Monitor pipelines with Cloud Monitoring and Cloud Logging

✅ Build 3 end-to-end capstone projects for your portfolio

✅ Clear the GCP Professional Data Engineer certification exam

Course Modules at a Glance

Module 1 — Cloud & GCP Fundamentals

Module 2 — GCP IAM & Security

Module 3 — Cloud Storage — GCP Data Lake

Module 4 — BigQuery Core Concepts & Architecture

Module 5 — BigQuery SQL & Advanced Querying

Module 6 — BigQuery Best Practices & Optimization

Module 7 — BigQuery Python & Automation

Module 8 — Cloud SQL — Managed Relational Database

Module 9 — Cloud Spanner — Globally Distributed Database

Module 10 — Cloud Bigtable — Wide Column NoSQL

Module 11 — Pub/Sub — Real-Time Messaging

Module 12 — Dataflow — Unified Batch & Streaming

Module 13 — Dataproc — Managed Spark & Hadoop

Module 14 — Cloud Composer — Pipeline Orchestration

Module 15 — Dataplex — Data Mesh & Governance

Module 16 — Data Catalog — Metadata Management

Module 17 — Datastream — Change Data Capture

Module 18 — GCP Pipeline Architecture Patterns

Module 19 — CI/CD for GCP Data Engineering

Module 20 — GCP Monitoring & Observability

Module 21 — End-to-End Capstone Projects

Module 22 — GCP Professional Data Engineer Exam Prep

Who Is This Course For

👨‍💻 Developers moving into GCP Data Engineering

🔄 ETL developers upgrading to cloud-native GCP tools

☁️ AWS or Azure engineers adding GCP to their skills

📊 Data Analysts transitioning into Data Engineering

🎓 Graduates targeting Data Engineer roles at top MNCs

🏆 Professionals preparing for the GCP Professional Data Engineer exam

Prerequisites

– Basic Python — loops, functions, file handling

– Basic SQL — SELECT, JOIN, GROUP BY

– No prior GCP experience needed — start from scratch

– Laptop with internet — all labs run on the GCP free tier

GCP Services Covered

☁️ Cloud Storage — Data Lake

🔍 BigQuery — Serverless Data Warehouse

⚡ Dataflow — Batch & Streaming (Apache Beam)

🖥️ Dataproc — Managed Spark & Hadoop

📨 Pub/Sub — Real-Time Messaging

🗄️ Cloud SQL — Managed PostgreSQL & MySQL

🌐 Cloud Spanner — Global Distributed Database

📦 Cloud Bigtable — Wide Column NoSQL

🎼 Cloud Composer — Apache Airflow

🏛️ Dataplex — Data Mesh & Governance

📋 Data Catalog — Metadata & Discovery

🔄 Datastream — Change Data Capture (CDC)

🔐 IAM & Secret Manager — Security

📊 Cloud Monitoring & Logging — Observability

🚀 Cloud Build & GitHub Actions — CI/CD

🏗️ Terraform — Infrastructure as Code

3 Capstone Projects

Project 1 — Batch Medallion Data Platform

Cloud SQL → Datastream → GCS → Dataproc → BigQuery → Composer Orchestration → Looker Studio

Project 2 — Real-Time Streaming Pipeline

Pub/Sub → Dataflow Streaming → BigQuery → Looker Studio Real-Time Dashboard

Project 3 — Serverless Event-Driven Pipeline

GCS Upload → Cloud Functions → Dataflow → BigQuery → Dataplex DQ → CI/CD with Terraform

Course Features

- Lectures: 245

- Quiz: 0

- Duration: 10 weeks

- Skill level: All levels

- Language: English

- Assessments: Yes

Curriculum

- 20 Sections

- 245 Lessons

- 10 Weeks

- Module 1: Cloud & GCP Fundamentals for Data Engineers (9 lessons)

- 1.1 What is Google Cloud Platform & Why GCP for Data Engineering

- 1.2 GCP Global Infrastructure — Regions, Zones, Projects

- 1.3 GCP Free Account Setup & Console Overview

- 1.4 GCP CLI — gcloud SDK Setup & Configuration

- 1.5 GCP IAM — Projects, Service Accounts, Roles & Permissions

- 1.6 GCP Billing & Cost Management

- 1.7 GCP Python SDK — google-cloud libraries Overview

- 1.8 Core GCP Data Services Overview for Data Engineers

- 1.9 Lab: Create GCP Project, Set Up gcloud CLI, Create Service Account

- Module 2: GCP IAM & Security for Data Engineers (9 lessons)

- 2.1 GCP IAM — Users, Groups, Service Accounts

- 2.2 IAM Roles — Basic (formerly Primitive), Predefined & Custom Roles

- 2.3 Service Account Keys vs Workload Identity Federation

- 2.4 IAM Best Practices — Least Privilege Principle

- 2.5 GCP Secret Manager — Store & Access Credentials Securely

- 2.6 Accessing Secrets from Python — google-cloud-secret-manager SDK

- 2.7 VPC & Private Access for Data Services

- 2.8 Encryption at Rest & In Transit on GCP

- 2.9 Lab: Create Service Account, Assign Roles, Store Secrets in Secret Manager

- Module 3: Cloud Storage — GCP Data Lake (13 lessons)

- 3.1 What is Cloud Storage & Core Concepts

- 3.2 Buckets, Objects, Folders & Naming Conventions

- 3.3 Storage Classes — Standard, Nearline, Coldline, Archive

- 3.4 Lifecycle Management Policies — Auto-Transition & Delete

- 3.5 Cloud Storage Access Control — IAM & ACLs

- 3.6 Signed URLs — Temporary Secure Access

- 3.7 Cloud Storage as Data Lake — Bronze, Silver, Gold Layers

- 3.8 Partitioning Strategy for Data Lake on GCS

- 3.9 Uploading & Downloading Files using Python (google-cloud-storage SDK)

- 3.10 Cloud Storage Event Notifications — Trigger Pipelines on Upload

- 3.11 Cloud Storage Transfer Service — Migrate Data from AWS S3 / Azure

- 3.12 Lab: Build GCP Data Lake on Cloud Storage — Bronze/Silver/Gold

- 3.13 Lab: Upload & Read Files from GCS using Python SDK

- Module 4: BigQuery — Core Concepts & Architecture (13 lessons)

- 4.1 What is BigQuery & Serverless Data Warehouse Architecture

- 4.2 BigQuery Architecture — Storage & Compute Separation

- 4.3 BigQuery vs Traditional Data Warehouse

- 4.4 Datasets, Tables, Views & External Tables

- 4.5 BigQuery Data Types & Schema Design

- 4.6 Partitioned Tables — Date, Integer, Ingestion-Time Partitioning

- 4.7 Clustered Tables — Improve Query Performance

- 4.8 BigQuery Storage Formats — Capacitor (Columnar)

- 4.9 Loading Data into BigQuery

- 4.10 Exporting Data from BigQuery to GCS

- 4.11 BigQuery Sandbox — Free Usage

- 4.12 Lab: Create Dataset, Load Data from GCS, Run Queries

- 4.13 Lab: Create Partitioned & Clustered Tables

- Module 5: BigQuery — SQL & Advanced Querying (16 lessons)

- 5.1 BigQuery SQL — Standard SQL Basics

- 5.2 Joins — Inner, Left, Right, Full, Cross

- 5.3 Aggregations — GROUP BY, HAVING, ROLLUP, CUBE

- 5.4 Window Functions — ROW_NUMBER, RANK, LEAD, LAG, NTILE

- 5.5 Subqueries & CTEs — WITH Clause

- 5.6 Array & Struct Data Types — Working with Nested Data

- 5.7 UNNEST — Flatten Array Columns

- 5.8 Date & Timestamp Functions

- 5.9 String Functions

- 5.10 Regular Expressions in BigQuery

- 5.11 BigQuery Scripting — Variables, Loops, IF/ELSE

- 5.12 BigQuery Stored Procedures

- 5.13 DML Operations — INSERT, UPDATE, DELETE, MERGE

- 5.14 Lab: Advanced SQL — Window Functions & Nested Data

- 5.15 Lab: MERGE Statement — Upsert Pattern in BigQuery

- 5.16 Quiz: BigQuery SQL — 20 Questions

- Module 6: BigQuery — Best Practices & Performance Optimization (18 lessons)

- 6.1 BigQuery Pricing Model — On-Demand vs Capacity (Slots)

- 6.2 Query Cost Optimization

- 6.3 Partitioning Best Practices

- 6.4 Clustering Best Practices

- 6.5 BigQuery Slots — Understanding Compute

- 6.6 BigQuery Reservations — Dedicated Slot Capacity

- 6.7 Materialized Views — Pre-Compute Expensive Queries

- 6.8 BigQuery BI Engine — In-Memory Query Acceleration

- 6.9 Denormalization Strategy — Nested & Repeated Fields

- 6.10 Table Expiry & Data Retention Policies

- 6.11 BigQuery Audit Logs — Track Query History & Costs

- 6.12 Query Plan & Execution Details — INFORMATION_SCHEMA

- 6.13 BigQuery Storage Write API — High-Throughput Ingestion

- 6.14 Authorized Views — Row & Column Level Security

- 6.15 Column-Level Encryption — AEAD Functions

- 6.16 Lab: Query Cost Optimization — Before & After Comparison

- 6.17 Lab: Materialized Views & BI Engine Setup

- 6.18 Lab: Row & Column Level Security with Authorized Views

- Module 7: BigQuery — Python & Automation (12 lessons)

- 7.1 BigQuery Python Client — google-cloud-bigquery SDK

- 7.2 Running Queries from Python

- 7.3 Loading Data into BigQuery from Python

- 7.4 Writing Query Results to GCS from Python

- 7.5 BigQuery DataFrames — pandas-gbq integration

- 7.6 BigQuery with pandas & PyArrow

- 7.7 Parameterized Queries — Prevent SQL Injection

- 7.8 BigQuery Jobs API — Async Query Execution

- 7.9 BigQuery Table Management from Python

- 7.10 BigQuery Storage Read API — Fast Parallel Reads

- 7.11 Lab: Full ETL Pipeline GCS → BigQuery using Python

- 7.12 Lab: Automate BigQuery Table Management with Python

- Module 8: Cloud SQL — Managed Relational Database (14 lessons)

- 8.1 What is Cloud SQL & Supported Engines — MySQL, PostgreSQL, SQL Server

- 8.2 Cloud SQL vs On-Premise Database

- 8.3 Cloud SQL Instance Setup & Configuration

- 8.4 High Availability — Primary & Standby Instance

- 8.5 Read Replicas — Scale Read Workloads

- 8.6 Cloud SQL Backups & Point-in-Time Recovery

- 8.7 Connecting Cloud SQL — Private IP vs Public IP

- 8.8 Cloud SQL Auth Proxy — Secure Connections

- 8.9 Connecting Cloud SQL from Python — SQLAlchemy & pg8000

- 8.10 Cloud SQL as Source in Dataflow & Dataproc Pipelines

- 8.11 Cloud SQL Change Data Capture — CDC for Pipelines

- 8.12 Cloud SQL → BigQuery via Datastream — Real-Time Sync

- 8.13 Lab: Set Up Cloud SQL PostgreSQL, Connect from Python

- 8.14 Lab: Cloud SQL CDC to BigQuery using Datastream

- Module 9: Cloud Spanner — Globally Distributed Database (11 lessons)

- 9.1 What is Cloud Spanner & Use Cases

- 9.2 Spanner vs Cloud SQL — When to Use What

- 9.3 Spanner Architecture — TrueTime & Global Consistency

- 9.4 Spanner Instances, Databases & Tables

- 9.5 Spanner Schema Design — Interleaved Tables

- 9.6 Spanner SQL — Standard SQL with Extensions

- 9.7 Spanner Read & Write from Python — google-cloud-spanner SDK

- 9.8 Spanner Change Streams — CDC for Real-Time Pipelines

- 9.9 Spanner → BigQuery Integration

- 9.10 Spanner Pricing — Processing Units

- 9.11 Lab: Create Spanner Instance, Insert & Query Data from Python

- Module 10: Cloud Bigtable — Wide Column NoSQL (11 lessons)

- 10.1 What is Cloud Bigtable & Use Cases in Data Engineering

- 10.2 Bigtable Architecture — Tablets, Nodes & Clusters

- 10.3 Row Key Design — Most Critical Concept

- 10.4 Column Families & Columns

- 10.5 Bigtable vs BigQuery — When to Use What

- 10.6 Bigtable vs HBase — Similarities

- 10.7 Reading & Writing Bigtable from Python — google-cloud-bigtable SDK

- 10.8 Bigtable for IoT, Time-Series & Real-Time Analytics

- 10.9 Bigtable → BigQuery — Federated Queries

- 10.10 Bigtable Performance — Node Scaling

- 10.11 Lab: Create Bigtable Table, Design Row Keys, Read/Write from Python

- Module 11: Pub/Sub — Real-Time Messaging (14 lessons)

- 11.1 What is Cloud Pub/Sub & Event-Driven Architecture

- 11.2 Pub/Sub vs Apache Kafka — Key Differences

- 11.3 Topics, Subscriptions, Publishers & Subscribers

- 11.4 Pull vs Push Subscriptions

- 11.5 Message Ordering & Exactly-Once Delivery

- 11.6 Pub/Sub Lite — Cost-Optimized for High Volume

- 11.7 Publishing Messages from Python — google-cloud-pubsub SDK

- 11.8 Subscribing & Processing Messages from Python

- 11.9 Dead Letter Topics — Handle Failed Messages

- 11.10 Pub/Sub Snapshots — Replay Messages

- 11.11 Pub/Sub as Source for Dataflow Streaming

- 11.12 Pub/Sub + Cloud Storage — Archive Messages to GCS

- 11.13 Lab: Publish & Consume Messages from Python

- 11.14 Lab: Pub/Sub → GCS — Archive Events with Push Subscription

- Module 12: Dataflow — Unified Batch & Streaming (17 lessons)

- 12.1 What is Cloud Dataflow & Apache Beam

- 12.2 Dataflow vs Dataproc — When to Use What

- 12.3 Apache Beam Programming Model

- 12.4 Batch Pipeline with Dataflow — GCS → Transform → BigQuery

- 12.5 Streaming Pipeline with Dataflow — Pub/Sub → Transform → BigQuery

- 12.6 Windowing in Dataflow

- 12.7 Watermarks & Late Data Handling

- 12.8 Triggers — When to Emit Results

- 12.9 Dataflow Templates — Pre-Built & Custom

- 12.10 Dataflow Flex Templates — Containerized Pipelines

- 12.11 Dataflow Runner — DirectRunner vs DataflowRunner

- 12.12 Dataflow Monitoring — Job Graph & Metrics

- 12.13 Dataflow Pricing — vCPU, Memory & Shuffle

- 12.14 Lab: Batch Pipeline — GCS → Dataflow (Beam) → BigQuery

- 12.15 Lab: Streaming Pipeline — Pub/Sub → Dataflow → BigQuery

- 12.16 Lab: Windowed Aggregation — Real-Time Sales Totals

- 12.17 Quiz: Dataflow & Apache Beam — 20 Questions

- Module 13: Dataproc — Managed Spark & Hadoop (16 lessons)

- 13.1 What is Cloud Dataproc & Use Cases

- 13.2 Dataproc vs Dataflow — When to Use Each

- 13.3 Dataproc Cluster Types

- 13.4 Cluster Configuration — Machine Types & Disk

- 13.5 Preemptible VMs — Cost Optimization

- 13.6 Submitting PySpark Jobs to Dataproc

- 13.7 Dataproc with Cloud Storage — GCS as HDFS

- 13.8 Dataproc with BigQuery — Spark BigQuery Connector

- 13.9 Dataproc Workflows — Multi-Step Job Orchestration

- 13.10 Dataproc Metastore — Managed Hive Metastore

- 13.11 Dataproc Serverless for Spark

- 13.12 Dataproc on GKE — Run Spark on Kubernetes

- 13.13 Dataproc Autoscaling

- 13.14 Lab: Submit PySpark ETL Job on Dataproc Cluster

- 13.15 Lab: Dataproc Serverless — GCS → Transform → BigQuery

- 13.16 Lab: Dataproc Workflow — Multi-Step Pipeline

- Module 14: Cloud Composer — Pipeline Orchestration (14 lessons)

- 14.1 What is Cloud Composer & Apache Airflow on GCP

- 14.2 Composer vs Cloud Scheduler — When to Use What

- 14.3 Composer Architecture — GKE Based

- 14.4 Composer 1 vs Composer 2 — Differences

- 14.5 DAG — Directed Acyclic Graph Concepts

- 14.6 Writing DAGs in Python

- 14.7 Airflow Operators for GCP

- 14.8 Task Dependencies — Sequential & Parallel

- 14.9 Airflow Variables & Connections

- 14.10 XComs — Pass Data Between Tasks

- 14.11 Airflow Sensors — Wait for File or Condition

- 14.12 Composer Monitoring — DAG Runs & Task Logs

- 14.13 Lab: Write GCP DAG — GCS Trigger → Dataproc → BigQuery

- 14.14 Lab: Orchestrate Full Pipeline with Cloud Composer

- Module 15: Dataplex — Data Mesh & Governance (10 lessons)

- 15.1 What is Dataplex & Data Mesh Architecture

- 15.2 Dataplex vs Data Catalog — Differences

- 15.3 Dataplex Lakes, Zones & Assets

- 15.4 Dataplex Data Quality Tasks — Built-In DQ Checks

- 15.5 Dataplex Data Lineage — Track Data Movement

- 15.6 Dataplex Discovery — Auto-Discover GCS & BigQuery Assets

- 15.7 Dataplex Security — Unified Access Control

- 15.8 Dataplex with BigQuery & GCS

- 15.9 Lab: Create Dataplex Lake, Add GCS & BigQuery Assets

- 15.10 Lab: Set Up Data Quality Rules on BigQuery Tables

- Module 16: Data Catalog — Metadata Management (12 lessons)

- 16.1 What is Data Catalog & Why Metadata Matters

- 16.2 Data Catalog Architecture — Search & Discovery

- 16.3 Auto-Discovery — BigQuery, GCS, Pub/Sub Assets

- 16.4 Tags & Tag Templates — Business Metadata

- 16.5 Tagging BigQuery Tables & Columns from Python

- 16.6 Policy Tags — Column-Level Security with Data Catalog

- 16.7 PII Column Classification & Access Control

- 16.8 Data Catalog Search — Find Datasets Across GCP

- 16.9 Data Lineage in Data Catalog

- 16.10 Data Catalog Python SDK — google-cloud-datacatalog

- 16.11 Lab: Tag BigQuery Tables with Business Metadata using Python

- 16.12 Lab: Set Policy Tags for PII Column-Level Security

- Module 17: Datastream — Change Data Capture (CDC) (10 lessons)

- 17.1 What is Datastream & CDC on GCP

- 17.2 Datastream Supported Sources — MySQL, PostgreSQL, Oracle, SQL Server

- 17.3 Datastream Supported Destinations — GCS, BigQuery

- 17.4 Datastream vs Debezium — GCP Native CDC

- 17.5 Setting Up Datastream — Source, Destination, Stream

- 17.6 CDC Events — INSERT, UPDATE, DELETE

- 17.7 Datastream → BigQuery — Real-Time Table Sync

- 17.8 Datastream → GCS → Dataflow → BigQuery Pattern

- 17.9 Handling Schema Changes in Datastream

- 17.10 Lab: MySQL → Datastream → BigQuery Real-Time Sync

- Module 18: GCP Data Engineering Pipeline Patterns (9 lessons)

- 18.1 Pattern 1 — Batch ELT Pipeline

- 18.2 Pattern 2 — Serverless Batch Pipeline

- 18.3 Pattern 3 — Real-Time Streaming Pipeline

- 18.4 Pattern 4 — CDC Real-Time Sync

- 18.5 Pattern 5 — Event-Driven Serverless Pipeline

- 18.6 Pattern 6 — Full Medallion on GCP

- 18.7 Pattern 7 — Orchestrated Multi-Step Pipeline

- 18.8 Lab: Choose & Build One Pattern End-to-End

- 18.9 Lab: Terraform — Deploy GCP Data Resources from Scratch

- Module 19: CI/CD for GCP Data Engineering (14 lessons)

- 19.1 What is CI/CD in GCP Data Engineering Context

- 19.2 Git Branching Strategy — Feature, Dev, QA, Production

- 19.3 Cloud Source Repositories — GCP Native Git

- 19.4 GitHub & Cloud Build Integration

- 19.5 Cloud Build — GCP CI/CD Service

- 19.6 Deploy Dataflow Flex Templates via Cloud Build

- 19.7 Deploy Dataproc Jobs via Cloud Build

- 19.8 Deploy BigQuery Schemas — Terraform or bq Commands

- 19.9 Terraform for GCP Data Resources

- 19.10 GitHub Actions for GCP Pipelines

- 19.11 Unit Testing PySpark with pytest & Great Expectations

- 19.12 Data Quality Checks Before Deployment

- 19.13 Lab: Cloud Build CI/CD — Push Code → Test → Deploy Dataflow

- 19.14 Lab: Terraform — Deploy GCP Data Resources from Scratch

- Module 20: End-to-End GCP Data Engineering Projects (3 lessons)